DiffuserCam: Lensless Single-exposure 3D Imaging

Nick Antipa*, Grace Kuo*, Reinhard Heckel, Ben Mildenhall, Emrah Bostan, Ren Ng, and Laura Waller

Abstract

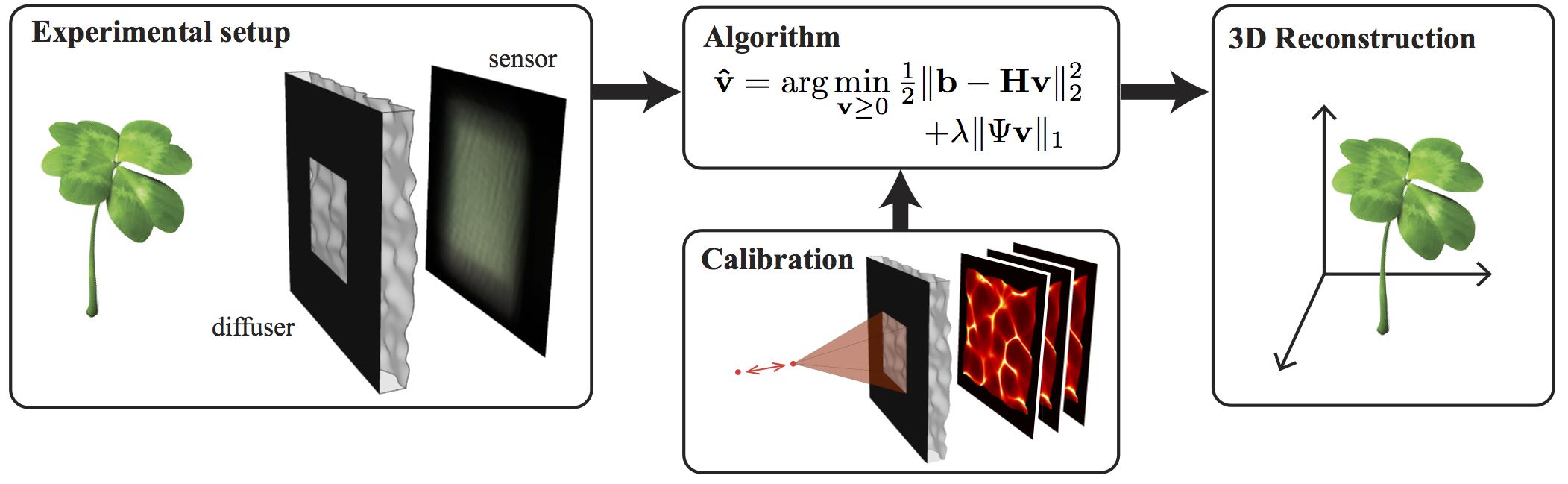

We demonstrate a compact and easy-to-build computational camera for single-shot 3D imaging. Our lensless system consists solely of a diffuser placed in front of a standard image sensor. Every point within the volumetric field-of-view projects a unique pseudorandom pattern of caustics on the sensor. By using a physical approximation and simple calibration scheme, we solve the large-scale inverse problem in a computationally efficient way. The caustic patterns enable compressed sensing, which exploits sparsity in the sample to solve for more 3D voxels than pixels on the 2D sensor. Our 3D reconstruction grid is chosen to match the experimentally measured two-point optical resolution, resulting in 100 million voxels being reconstructed from a single 1.3 megapixel image. However, the effective resolution varies significantly with scene content. Because this effect is common to a wide range of computational cameras, we provide new theory for analyzing resolution in such systems.
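The reconstruction idea in the abstract can be illustrated with a small numerical sketch. The abstract does not spell out the "physical approximation", so the code below assumes it is lateral shift invariance at each depth, which makes the measurement a sum over depths of 2D convolutions between each depth slice and that depth's calibrated caustic pattern. It also assumes circular convolution with no sensor cropping, random synthetic PSFs in place of calibration data, and a plain ISTA solver with an l1 sparsity penalty and a non-negativity constraint. The function names `forward`, `adjoint`, `objective`, and `reconstruct` are hypothetical, and this is not the authors' implementation.

```python
import numpy as np

def forward(volume, psf_fft):
    """Assumed model: image = sum over depth z of (psf_z circularly convolved with volume_z)."""
    vol_fft = np.fft.fft2(volume, axes=(-2, -1))
    return np.real(np.fft.ifft2(np.sum(psf_fft * vol_fft, axis=0)))

def adjoint(image, psf_fft):
    """Adjoint of `forward`: correlate the 2D image with each depth's PSF."""
    img_fft = np.fft.fft2(image)
    return np.real(np.fft.ifft2(np.conj(psf_fft) * img_fft, axes=(-2, -1)))

def objective(volume, image, psf_fft, lam):
    """Least-squares data term plus l1 sparsity penalty."""
    residual = forward(volume, psf_fft) - image
    return 0.5 * np.sum(residual ** 2) + lam * np.sum(np.abs(volume))

def reconstruct(image, psf_fft, lam=1e-3, n_iter=200):
    """ISTA with a non-negativity constraint (an illustrative solver choice)."""
    # Step size 1/L, where L is the largest eigenvalue of A^T A for this model.
    step = 1.0 / np.max(np.sum(np.abs(psf_fft) ** 2, axis=0))
    v = np.zeros(psf_fft.shape)
    for _ in range(n_iter):
        grad = adjoint(forward(v, psf_fft) - image, psf_fft)
        # Gradient step, then soft-threshold and clip to non-negative values.
        v = np.maximum(v - step * grad - step * lam, 0.0)
    return v

# Synthetic stand-in for calibration: one sparse pseudorandom pattern per depth.
rng = np.random.default_rng(0)
Z, H, W = 3, 32, 32
psfs = (rng.random((Z, H, W)) < 0.05).astype(float)
psfs /= psfs.sum(axis=(1, 2), keepdims=True)
psf_fft = np.fft.fft2(psfs, axes=(-2, -1))

# Sparse scene: two point sources at different depths.
truth = np.zeros((Z, H, W))
truth[0, 5, 7] = 1.0
truth[2, 20, 12] = 0.5

image = forward(truth, psf_fft)          # a single 2D measurement
estimate = reconstruct(image, psf_fft)   # Z * H * W unknowns from H * W pixels
print("voxels:", estimate.size, "pixels:", image.size)
```

In this toy setup the volume has three times as many voxels as the image has pixels, so the sparsity penalty is what makes the problem well posed. A real system would also need to model the finite sensor area and use measured PSFs.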

Resources

Conference Papers

Grace Kuo*, Nick Antipa*, Ren Ng, and Laura Waller. "DiffuserCam: Diffuser-Based Lensless Cameras." Computational Optical Sensing and Imaging. Optical Society of America, 2017.

Nick Antipa*, Grace Kuo*, Ren Ng, and Laura Waller. "3D DiffuserCam: Single-Shot Compressive Lensless Imaging." Computational Optical Sensing and Imaging. Optical Society of America, 2017.

Grace Kuo, Nick Antipa, Ren Ng, and Laura Waller. "3D Fluorescence Microscopy with DiffuserCam." Computational Optical Sensing and Imaging. Optical Society of America, 2018.

Awards

Best Demo (people's choice) at the International Conference on Computational Photography (ICCP) 2017:

DiffuserCam: A Diffuser Based Lensless Camera

Grace Kuo, Nick Antipa, Shreyas Parthasarathy, Camille Biscarrat, Ben Mildenhall, Ren Ng, and Laura Waller

Extensions

3D Microscopy

Kyrollos Yanny*, Nick Antipa*, William Liberti, Sam Dehaeck, Kristina Monakhova, Fanglin Linda Liu, Konlin Shen, Ren Ng, and Laura Waller. Miniscope3D: optimized single-shot miniature 3D fluorescence microscopy. Light Sci Appl 9, 171 (2020). [pdf] [Project page and code]

Grace Kuo, Fanglin Linda Liu, Irene Grossrubatscher, Ren Ng, and Laura Waller, "On-chip fluorescence microscopy with a random microlens diffuser," Opt. Express 28, 8384-8399 (2020) [pdf]

Fanglin Linda Liu, Grace Kuo, Nick Antipa, Kyrollos Yanny, and Laura Waller, "Fourier DiffuserScope: single-shot 3D Fourier light field microscopy with a diffuser," Opt. Express 28, 28969-28986 (2020) [pdf]